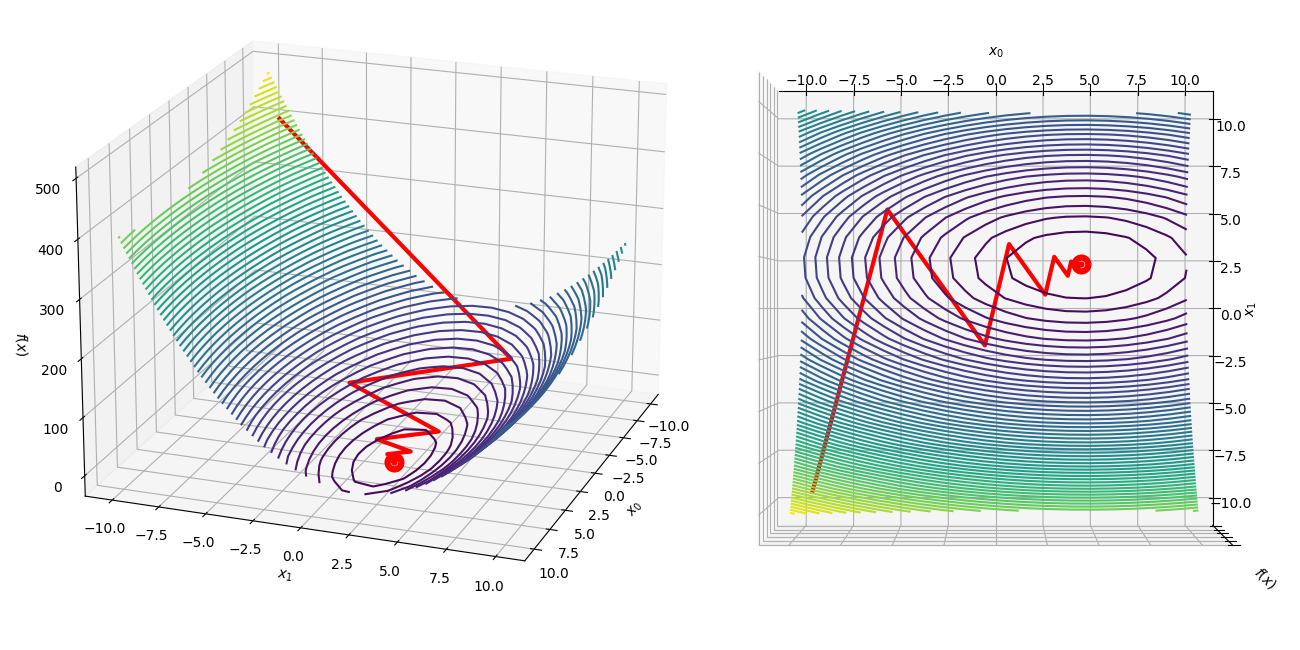

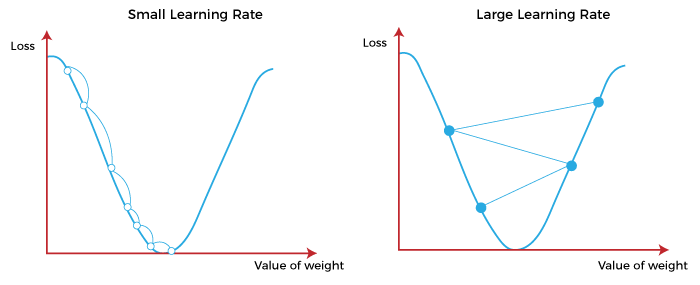

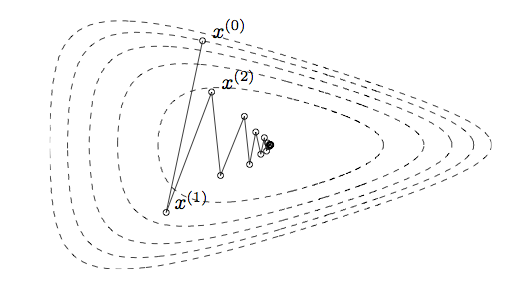

Visualizing the gradient descent method

By a mysterious writer

Last updated 1 April 2025

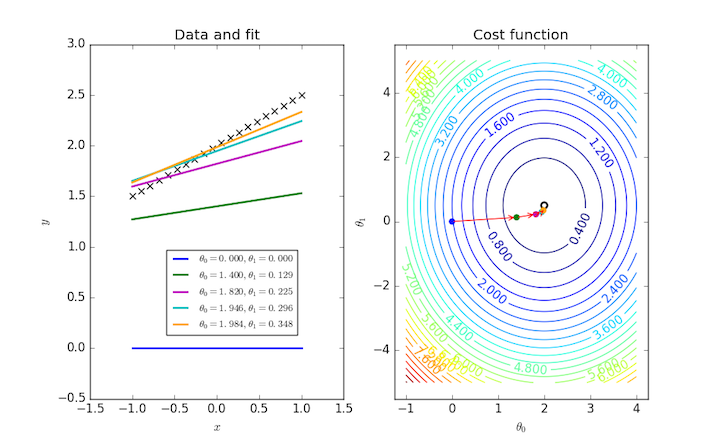

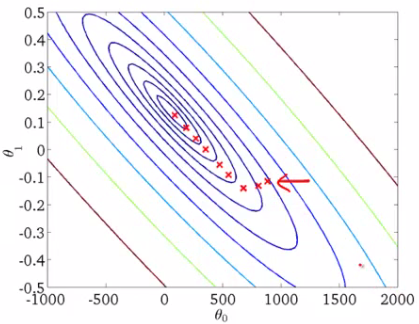

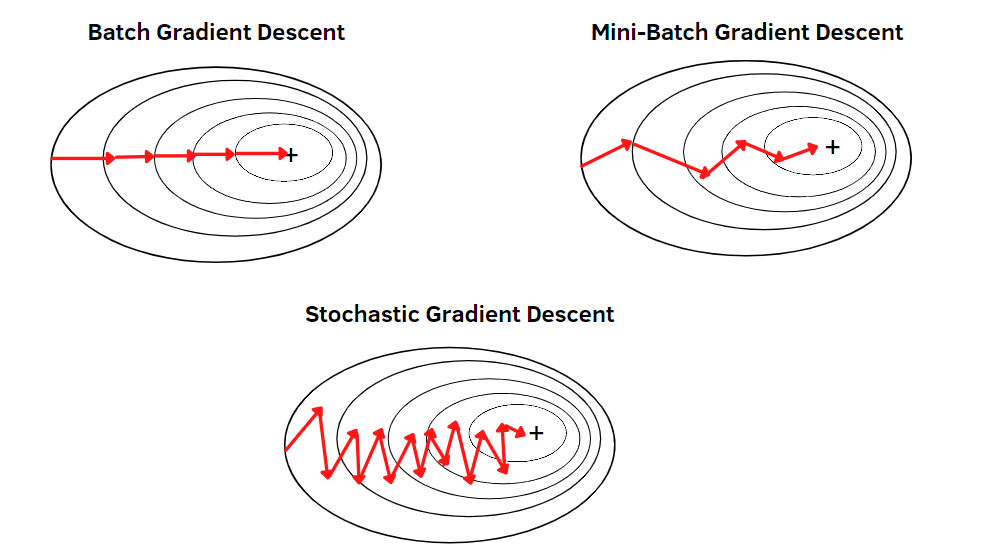

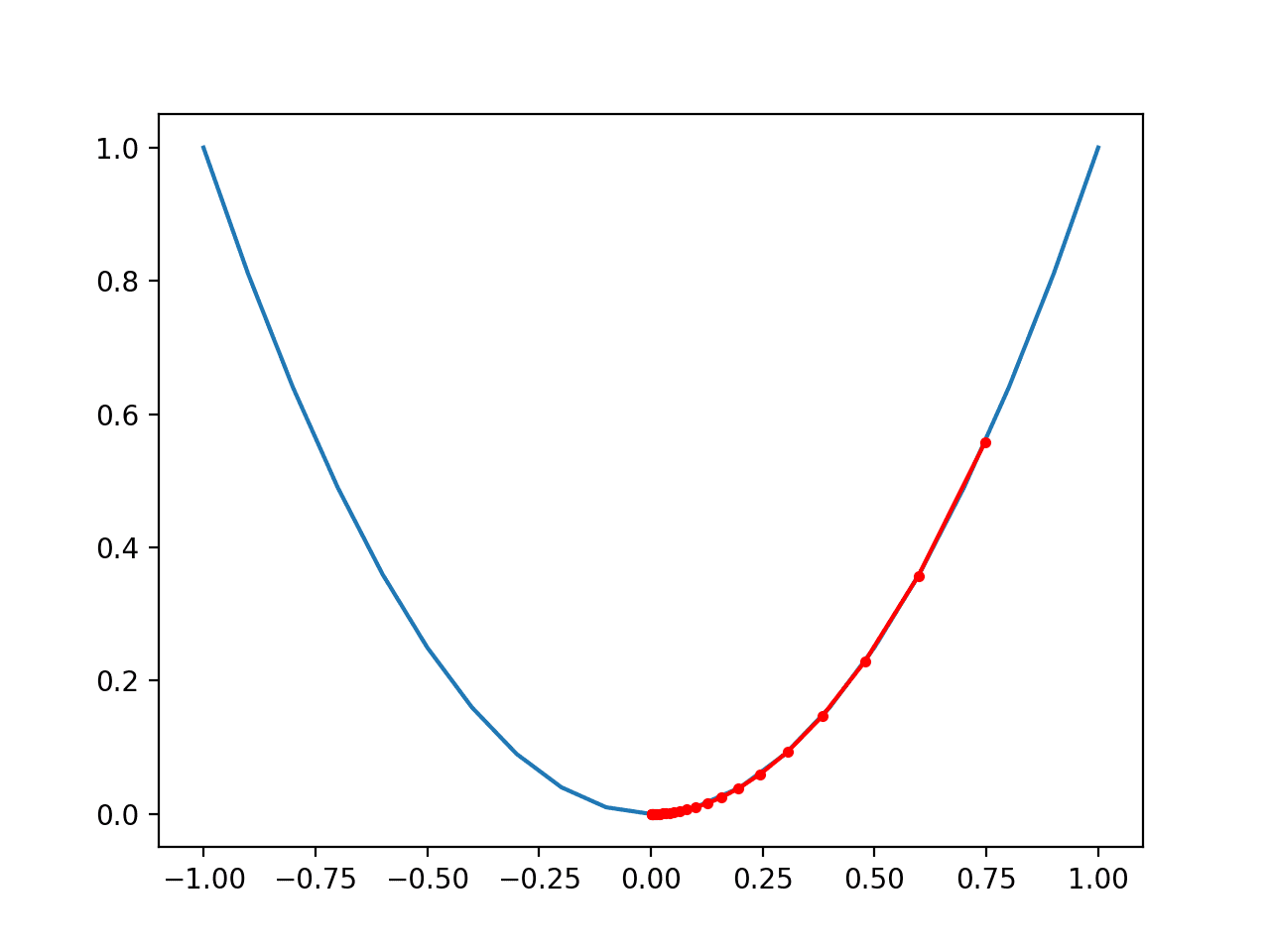

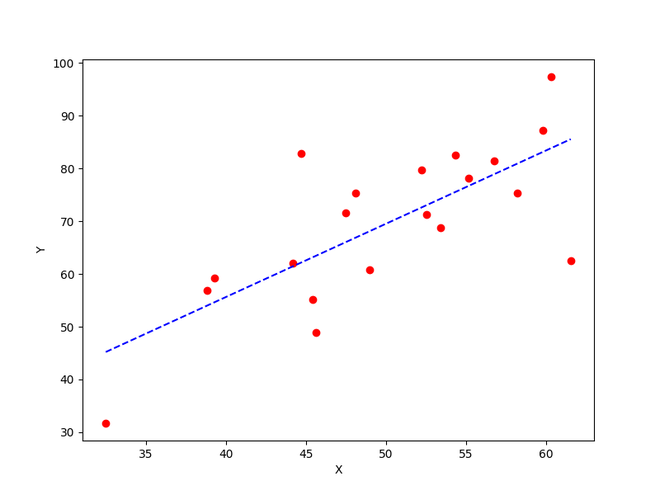

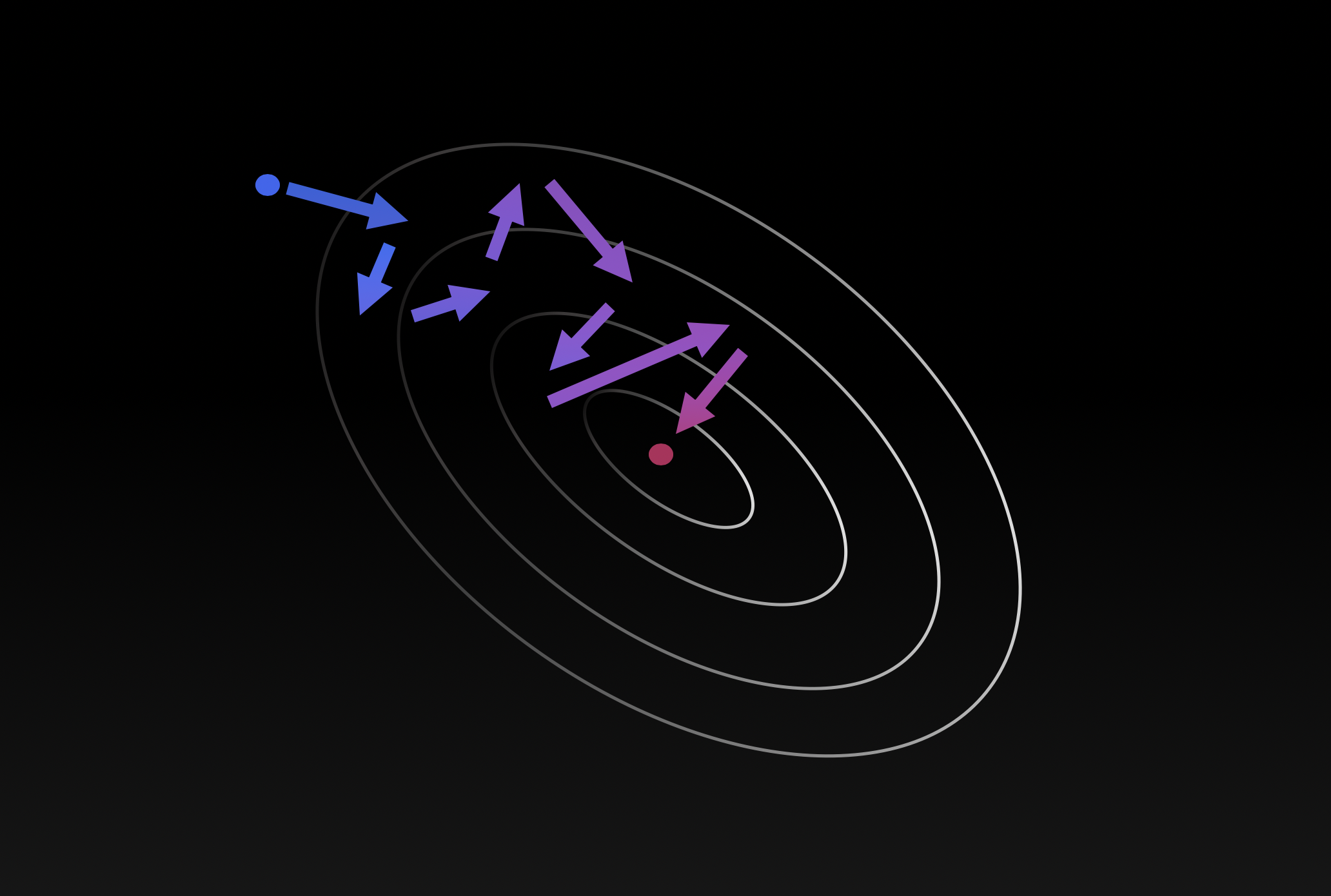

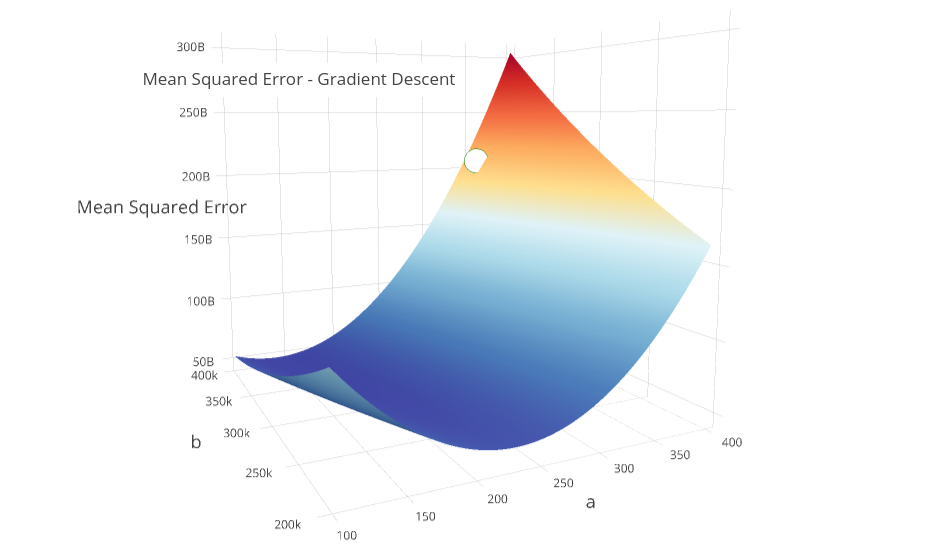

In the gradient descent method of optimization, a hypothesis function, $h_{\boldsymbol{\theta}}(x)$, is fitted to a data set, $(x^{(i)}, y^{(i)})$ ($i=1,2,\cdots,m$), by minimizing an associated cost function, $J(\boldsymbol{\theta})$, with respect to the parameters $\boldsymbol\theta = (\theta_0, \theta_1, \cdots)$. The cost function measures how closely the hypothesis fits the data for a given choice of $\boldsymbol \theta$.
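As a rough illustration of the fitting procedure described above, here is a minimal sketch in plain Python. Several things in it are assumptions rather than anything stated in the text: the hypothesis is taken to be a straight line, $h_{\boldsymbol{\theta}}(x) = \theta_0 + \theta_1 x$; the cost is taken to be the mean squared error, $J(\boldsymbol{\theta}) = \frac{1}{2m}\sum_{i=1}^{m}\left(h_{\boldsymbol{\theta}}(x^{(i)}) - y^{(i)}\right)^2$; and the learning rate `alpha`, the step count `n_steps`, and the toy data set are hypothetical choices made only for the example.

```python
# Minimal gradient descent sketch.
# ASSUMPTIONS (not from the article): linear hypothesis, mean-squared-error
# cost, fixed learning rate, and a made-up data set.

def hypothesis(theta, x):
    """Assumed linear hypothesis h_theta(x) = theta0 + theta1 * x."""
    theta0, theta1 = theta
    return theta0 + theta1 * x

def cost(theta, xs, ys):
    """Assumed cost J(theta) = 1/(2m) * sum of squared residuals."""
    m = len(xs)
    return sum((hypothesis(theta, x) - y) ** 2 for x, y in zip(xs, ys)) / (2 * m)

def gradient(theta, xs, ys):
    """Partial derivatives of J with respect to theta0 and theta1."""
    m = len(xs)
    residuals = [hypothesis(theta, x) - y for x, y in zip(xs, ys)]
    d_theta0 = sum(residuals) / m
    d_theta1 = sum(r * x for r, x in zip(residuals, xs)) / m
    return d_theta0, d_theta1

def gradient_descent(xs, ys, alpha=0.1, n_steps=2000, theta=(0.0, 0.0)):
    """Repeatedly step against the gradient; record J after every step."""
    history = [cost(theta, xs, ys)]
    for _ in range(n_steps):
        d_theta0, d_theta1 = gradient(theta, xs, ys)
        theta = (theta[0] - alpha * d_theta0, theta[1] - alpha * d_theta1)
        history.append(cost(theta, xs, ys))
    return theta, history

# Hypothetical data lying exactly on the line y = 1 + 2x.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

theta, history = gradient_descent(xs, ys)
print("fitted parameters:", theta)
print("initial cost:", history[0], "final cost:", history[-1])
```

The `history` list holds the value of $J(\boldsymbol{\theta})$ after each step, so plotting it against the step number is one simple way to visualize the descent. With a learning rate that is too large for the data, the same loop would diverge instead of settling, which is why `alpha` is kept small here.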

Gradient Descent Algorithm in Machine Learning - Analytics Vidhya

Visualizing the vanishing gradient problem

Gradient Descent and its Types - Analytics Vidhya

Gradient Descent With Momentum from Scratch

Gradient Descent Visualization - File Exchange - MATLAB Central

How to implement a gradient descent in Python to find a local minimum ? - GeeksforGeeks

What is Gradient Descent? Gradient Descent in Machine Learning

What is meant by gradient descent in laymen terms? - Quora

ZO-AdaMM: Derivative-free optimization for black-box problems - MIT-IBM Watson AI Lab

The Gradient: A Visual Descent

Recomendado para você

-

Implementing the Steepest Descent Algorithm in Python from Scratch, by Nicolo Cosimo Albanese01 abril 2025

Implementing the Steepest Descent Algorithm in Python from Scratch, by Nicolo Cosimo Albanese01 abril 2025 -

Method of Steepest Descent -- from Wolfram MathWorld01 abril 2025

-

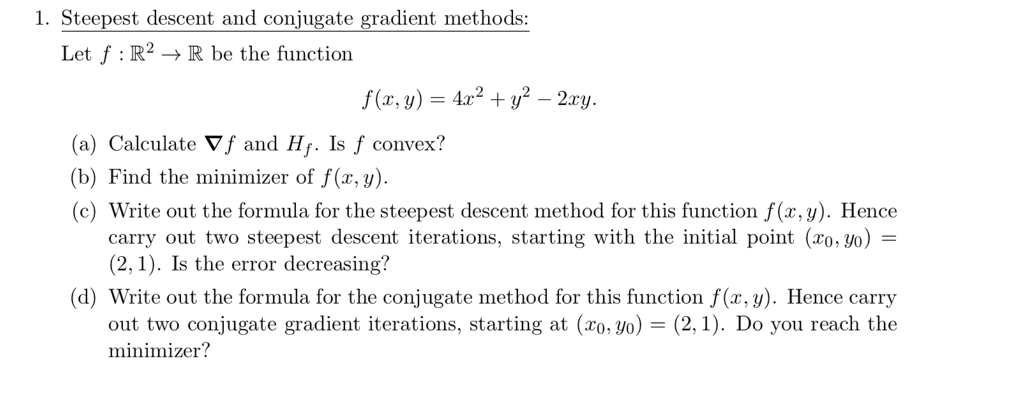

Solved 1. Steepest descent and conjugate gradient methods01 abril 2025

Solved 1. Steepest descent and conjugate gradient methods01 abril 2025 -

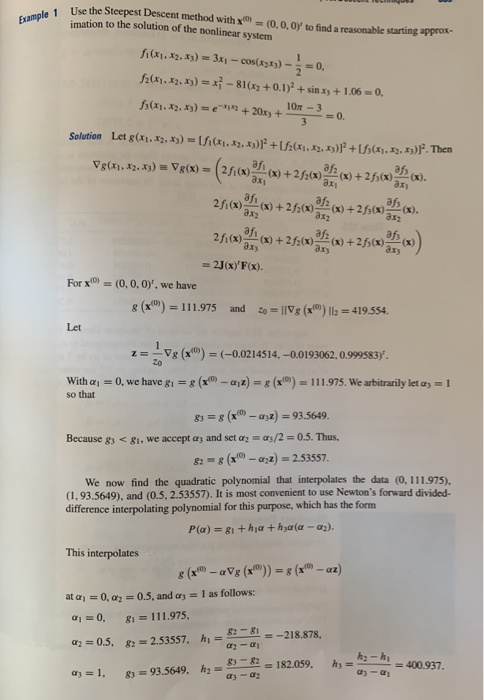

Solved (b) Consider the nonlinear system of equations z +01 abril 2025

Solved (b) Consider the nonlinear system of equations z +01 abril 2025 -

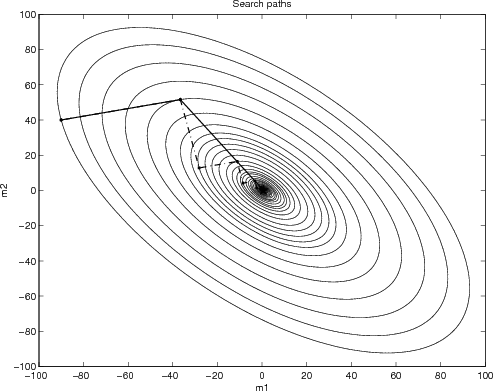

Why steepest descent is so slow01 abril 2025

Why steepest descent is so slow01 abril 2025 -

nonlinear optimization - Do we need steepest descent methods, when minimizing quadratic functions? - Mathematics Stack Exchange01 abril 2025

nonlinear optimization - Do we need steepest descent methods, when minimizing quadratic functions? - Mathematics Stack Exchange01 abril 2025 -

I want to find x solution using Steepest Descent by Python. But when I was running it, it said invalid syntax at def. what should I fix about? - Stack Overflow01 abril 2025

I want to find x solution using Steepest Descent by Python. But when I was running it, it said invalid syntax at def. what should I fix about? - Stack Overflow01 abril 2025 -

Gradient Descent in Machine Learning - Javatpoint01 abril 2025

Gradient Descent in Machine Learning - Javatpoint01 abril 2025 -

![PDF] Steepest Descent and Conjugate Gradient Methods with Variable Preconditioning](https://d3i71xaburhd42.cloudfront.net/a0174a41c7d682aeb1d7e7fa1fbd2404e037a638/11-Figure8.1-1.png) PDF] Steepest Descent and Conjugate Gradient Methods with Variable Preconditioning01 abril 2025

PDF] Steepest Descent and Conjugate Gradient Methods with Variable Preconditioning01 abril 2025 -

gradient-descent-backtracking.png01 abril 2025

gradient-descent-backtracking.png01 abril 2025
